Early fall detection from video using 3D-CNNs

Code and initial results coming soon

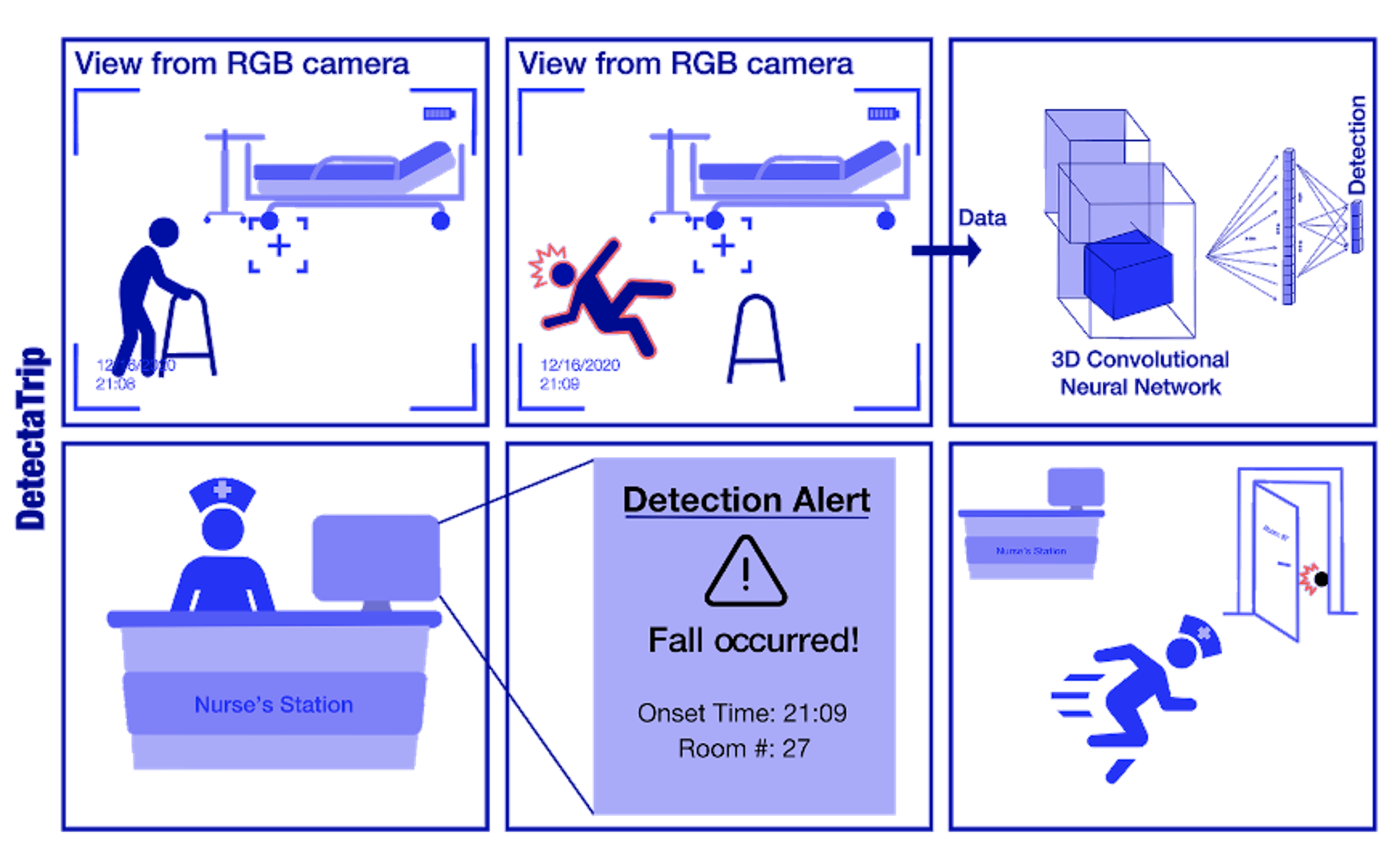

According to the CDC, the fall-related fatality rate in the United States is rising as the population ages, increasing 30% from 2007 to 2016. Each year, over 3 million elderly people are treated in emergency departments for fall-related injuries. Accurate, real-time fall detection is vital for timely intervention and for minimizing long-term effects. Current state-of-the-art approaches focus on video- and sensor-based detection systems. Video-based monitoring is ubiquitous in hospitals and elderly care homes, where fall detection applications can be most helpful. Our work aims to localize falls in RGB video feeds.

Approach: 3D-CNNs

Fall datasets are extremely scarce. Existing simulated datasets do not accurately capture falls “in the wild”, because falls are unexpected and therefore difficult to simulate. Action recognition, however, is a well-studied field with many open-source datasets. We therefore implemented a transfer learning approach, starting from a 3D-ResNet architecture pretrained on the Kinetics-700 action recognition dataset and performing supervised fine-tuning on fall datasets.
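
A 3D-CNN consumes a short stack of consecutive frames rather than a single image, so localizing a fall in a longer video generally means scoring overlapping clips. The sketch below shows one way frame indices could be windowed into clips and given clip-level labels for fine-tuning. The clip length of 16, the stride of 8, the rule "a clip is a fall if any of its frames is a fall", and the helper names `make_clips` and `clip_labels` are all illustrative assumptions, not details taken from this project.

```python
from typing import List, Tuple


def make_clips(num_frames: int, clip_len: int = 16, stride: int = 8) -> List[Tuple[int, int]]:
    """Return (start, end) frame-index pairs (end exclusive) for every full clip.

    clip_len and stride are hypothetical defaults, not values from the project.
    """
    if clip_len <= 0 or stride <= 0:
        raise ValueError("clip_len and stride must be positive")
    # Only full-length clips are kept; a video shorter than clip_len yields none.
    return [(s, s + clip_len) for s in range(0, num_frames - clip_len + 1, stride)]


def clip_labels(frame_labels: List[int], clips: List[Tuple[int, int]]) -> List[int]:
    """Label a clip 1 (Fall) if any frame inside it is labeled 1 (assumed rule)."""
    return [int(any(frame_labels[s:e])) for s, e in clips]


# Toy video: 64 frames with a fall annotated on frames 40 to 49.
frame_labels = [0] * 40 + [1] * 10 + [0] * 14
clips = make_clips(len(frame_labels))
labels = clip_labels(frame_labels, clips)

for (start, end), label in zip(clips, labels):
    print(f"frames [{start:2d}, {end:2d}) -> {'Fall' if label else 'No Fall'}")
```

A smaller stride gives finer temporal localization at the cost of more clips to score per video.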

Demo

A demo of our model's performance is shown below. The outer border shows the ground-truth label (Fall vs. No Fall) at each timestep, and the inner border shows our prediction (green = No Fall, red = Fall).
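
The same per-timestep comparison of label and prediction can be summarized numerically. The following is a minimal sketch, assuming labels and predictions are available as equal-length lists of 0 (No Fall) and 1 (Fall). The function name `frame_metrics` and the toy sequences are hypothetical and are not results from this project.

```python
from typing import Dict, List


def frame_metrics(labels: List[int], preds: List[int]) -> Dict[str, float]:
    """Compare per-timestep labels and predictions (1 = Fall, 0 = No Fall)."""
    if len(labels) != len(preds):
        raise ValueError("labels and preds must have the same length")
    pairs = list(zip(labels, preds))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)  # fall correctly flagged
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)  # false alarm
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)  # missed fall
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)  # correct No Fall
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
    }


# Toy sequences for illustration only.
toy_labels = [0, 0, 1, 1, 1, 0]
toy_preds = [0, 1, 1, 1, 0, 0]
metrics = frame_metrics(toy_labels, toy_preds)
print(metrics)
```

For fall detection, recall on the Fall class is usually the figure to watch, since a missed fall costs more than a false alarm.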

Awards